Every few weeks, my Facebook newsfeed throws me an article like “Most Livable Cities” or “Best Cities for Quality of Life” or “Happiest and Unhappiest U.S. Cities” or some such. These rankings are generally quite different (though with a few common themes), and often include (in the top ten or so) the home city of whoever shared the link with their fellow facefriends.

The rankings vary widely in source, methodology, and credibility (and, for that matter, in whether they use supporting data at all). So I was curious to do a sort of meta-analysis, combining these lists in a reasonable way to see (1) what cities are most livable by consensus, and (2) what social/demographic indicators seem to make them that way. Here are the main things I learned:

- The livability of a city isn’t related to the happiness of its people.

- Livability rankings come in two types, which I call Chill Rankings and Jetsetter Rankings. The statistical models of livability that they produce are totally different, and there is no overlap in their top ten cities.

- A few cities crack the top 25 for both types, though, suggesting a more balanced lifestyle: Washington DC, Boston, San Francisco, Pittsburgh, Minneapolis, Seattle, Buffalo, Honolulu, Portland, and Houston.

- Surprisingly, cost of living and pollution have little relationship with livability in either type of ranking.

The Experiment

In full disclosure, this was originally just an excuse to play around with structural equation modeling (more below). But I also wanted to take an inductive approach to livability: to blenderize all these contradictory lists and try to learn something from them. The typical approach seems wantonly deductive to me: e.g., rank cities by average rent, household income, commute time, and violent crimes per capita using census data, and then sum those rankings into an overall score. Never mind that rent and income are strongly correlated (and effectively double-counted), or that crime should maybe count for more than commute time. Some of these rankings come from studies by scientists who know how to deal with these complexities, but many are compiled by journalists (or their interns) based on intuition alone.
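For concreteness, here is a minimal Python sketch of that rank-and-sum recipe. The city names and metric values are invented for illustration, and `rank` and `rank_sum_scores` are hypothetical helpers rather than anyone's published method. The point is only the mechanics: every metric gets equal weight, no matter how much it overlaps with the others.

```python
# Hypothetical sketch of the "rank each metric, then sum the ranks" approach.
# All city names and numbers below are invented for illustration.


def rank(values, higher_is_better):
    """Return 1-based ranks (1 = best); ties are broken by input order."""
    order = sorted(range(len(values)), key=lambda i: values[i], reverse=higher_is_better)
    ranks = [0] * len(values)
    for position, i in enumerate(order, start=1):
        ranks[i] = position
    return ranks


def rank_sum_scores(cities, metrics):
    """metrics maps name -> (values aligned with cities, higher_is_better).
    A lower total means 'more livable' under this naive scheme."""
    totals = [0] * len(cities)
    for values, higher_is_better in metrics.values():
        for i, r in enumerate(rank(values, higher_is_better)):
            totals[i] += r
    return dict(zip(cities, totals))


cities = ["Alpha", "Beta", "Gamma"]
metrics = {
    "median_rent": ([1500, 900, 1200], False),      # lower rent is better
    "median_income": ([80000, 45000, 60000], True),
    "commute_minutes": ([35, 20, 25], False),
    "violent_crime": ([4.0, 6.5, 3.0], False),      # per 1,000 residents
}
print(rank_sum_scores(cities, metrics))  # → {'Alpha': 9, 'Beta': 8, 'Gamma': 7}
```

Here crime counts exactly as much as commute time, and because rent and income tend to move together across cities, the two metrics largely measure the same thing twice.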

Structural Equation Models (SEMs)

I wanted to design the meta-analysis around SEMs, which I discovered from papers on social influence in online communities. SEMs were first articulated by Sewall Wright, a geneticist at my alma mater UW-Madison… and apparently a frequent dinner guest at my band’s singer’s mother’s childhood home. (Small world!) But my training as a computer scientist did not cover SEMs, in school or in any other formal setting. I’ve been eager to learn more and find an interesting application.

SEMs are graphical models with the sweet ability to create hypothetical latent variables and uncover statistical relationships between them. For example, there isn’t really a way to measure livability precisely, but we can tap into so-called “manifest variables” (like these Facebook-flung livability lists) to create a “latent construct” that summarizes them all. The idea is that this construct represents the “true but hidden” livability measure, and all these rankings are “symptomatic” manifestations of the underlying scale. The same can be done for cost of living (a construct of the average rent, price of gas, cost of a slice of pizza, etc.) or education (a construct of the percentage of residents with various degrees). We can also essentially perform a regression analysis to see how constructs like cost of living and education might influence livability.
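As a rough intuition for what a latent construct summarizes, the sketch below collapses several manifest variables into one score by standardizing each and averaging them. This is a deliberate simplification and not PLS path modeling. PLS estimates a separate weight for each indicator iteratively from the model structure, while this sketch weights them equally. The helper names and the cost-of-living numbers are hypothetical.

```python
from statistics import mean, pstdev


def zscores(values):
    """Standardize to mean 0 and (population) standard deviation 1."""
    mu, sd = mean(values), pstdev(values)
    return [(v - mu) / sd for v in values]


def composite_score(indicators):
    """Equal-weight composite: average each city's standardized indicator values.
    (A stand-in for a latent construct score, not the PLS estimate.)"""
    standardized = [zscores(col) for col in indicators]
    return [mean(city_values) for city_values in zip(*standardized)]


# Hypothetical cost-of-living indicators for four cities.
rent = [900, 1400, 1100, 2000]
gas = [3.10, 3.60, 3.30, 3.90]
pizza = [2.50, 3.00, 2.75, 3.50]

cost_of_living = composite_score([rent, gas, pizza])
print([round(c, 2) for c in cost_of_living])
```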

Model and Data

The figure below illustrates my basic model, which incorporates a lot of the general assumptions about what influences livability:

All these variables are latent constructs. To create them, I first needed manifest variables, so I wrote some scripts to download and munge a buttload of social and demographic data about the 292 American cities with populations over 100,000. Sources included the Census, FBI Uniform Crime Reports, Consumer Price Index, EPA, and Google Places API. I won’t explain here exactly how I used these sources to make the constructs above (from manifest variables like number of art galleries per capita, median home value, per capita murders, percent population below poverty level, etc.), but I’ll put the data and gory details on GitHub. I used the PLS Path Modeling package in R for the analyses.

The livability construct is the most crucial. For this, I gathered 18 different livability rankings: mostly the aforementioned articles from my Facebook feed, plus rankings from websites dedicated to the subject (AreaVibes and Livability.com), along with the U.S. cities that appear in the well-established EIU and Mercer global quality-of-life rankings. I also included ratings from the 2013 Gallup-Healthways State of American Well-Being Report.

Types of Livability

In order for a latent construct to be considered valid, the manifest variables used to create it need to correlate. The correlation shouldn’t be perfect of course — otherwise there’s no value in the latent construct — but they should more or less “point in the same direction” so to speak. To get a sense of how these 18 livability rankings correlate with each other, I started with a principal component analysis, which is illustrated here graphically:
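Before running a full PCA, one quick way to check whether a set of rankings "points in the same direction" is to look at their pairwise correlations. The sketch below is that simpler check, not the PCA itself. It computes each ranking's average Pearson correlation with all the others, so a ranking that disagrees with the rest (like the happiness ranking discussed next) shows up with a negative average. The ranking names and scores are invented for illustration.

```python
from itertools import combinations
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def mean_agreement(rankings):
    """Average correlation of each ranking with every other ranking."""
    totals = {name: 0.0 for name in rankings}
    for a, b in combinations(rankings, 2):
        r = pearson(rankings[a], rankings[b])
        totals[a] += r
        totals[b] += r
    n_others = len(rankings) - 1
    return {name: total / n_others for name, total in totals.items()}


# Hypothetical livability scores (higher = more livable) for five cities.
rankings = {
    "list_a": [5, 4, 3, 2, 1],
    "list_b": [4, 5, 3, 1, 2],
    "list_c": [5, 3, 4, 2, 1],
    "happiness": [1, 2, 3, 5, 4],
}
agreement = mean_agreement(rankings)
for name, avg_r in agreement.items():
    print(f"{name}: {avg_r:+.2f}")
```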

The first thing to notice is that a recent happiest and unhappiest cities ranking is unrelated — even opposite — to all of the others; it’s the only one that points to the left. So either the study was bunk (e.g., because the happy southerners were too hospitable to be honest), or a person’s happiness has little to do with the well-being that their home city affords. As the authors of the study put it:

Our research indicates that people care about more than happiness alone, so other factors may encourage them to stay in a city despite their unhappiness. This means that researchers and policy-makers should not consider an increase in reported happiness as an overriding objective.

The second interesting thing is that the remaining rankings all point to the right (the first principal component dimension), but some point up while others point down (the second dimension). So the rankings form two “clusters” which are pretty much independent of each other. Most rankings above the horizontal axis come from American websites, reports, and consumer advocacy groups. Rankings below it come from international outfits or arts- and business-oriented groups. Let’s call the first cluster Chill Rankings (i.e., all-American, domestic, down-home), and call the second cluster Jetsetter Rankings (i.e., metro, international, cosmopolitan).

The Chill Model

Now let’s see what the SEM model looks like when we fit it to rankings in the Chill cluster. In truth, I didn’t use all the Chill rankings as manifest variables. I removed them one at a time, each time dropping the one with the lowest factor loading, until the livability construct became sufficiently “internally consistent.” More specifically, I ended up using six rankings that achieved Cronbach’s alpha of 0.74, Dillon-Goldstein’s rho of 0.82, and first and second eigenvalues of 2.6 and 0.95, respectively. According to Sanchez (2013), these are acceptable values for construct reliability.
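The pruning step can be sketched in Python as follows. This is a hedged stand-in, not the procedure of the R package. It uses Cronbach's alpha as the only stopping criterion, with a target of 0.7 that is assumed here as a common rule of thumb. It also approximates each indicator's factor loading by its correlation with the sum of the remaining indicators. Dillon-Goldstein's rho is included using the usual formula for standardized loadings, (Σλ)² / ((Σλ)² + Σ(1 − λ²)). All function names and data are hypothetical.

```python
from math import sqrt
from statistics import pvariance


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


def cronbach_alpha(columns):
    """Cronbach's alpha; each column is one indicator's values across cities.
    Assumes the indicators are already on a comparable scale."""
    k = len(columns)
    totals = [sum(row) for row in zip(*columns)]
    item_variance = sum(pvariance(col) for col in columns)
    return k / (k - 1) * (1 - item_variance / pvariance(totals))


def dillon_goldstein_rho(loadings):
    """Dillon-Goldstein's rho from standardized outer loadings."""
    s = sum(loadings)
    return s * s / (s * s + sum(1 - lam * lam for lam in loadings))


def proxy_loading(kept, name):
    """Stand-in for a factor loading: correlation with the sum of the other indicators."""
    rest = [sum(row) for row in zip(*(col for n, col in kept.items() if n != name))]
    return pearson(kept[name], rest)


def prune_indicators(named_columns, target_alpha=0.7):
    """Drop the weakest indicator one at a time until alpha reaches the target."""
    kept = dict(named_columns)
    while len(kept) > 2 and cronbach_alpha(list(kept.values())) < target_alpha:
        weakest = min(kept, key=lambda name: proxy_loading(kept, name))
        del kept[weakest]
    return kept


# Hypothetical scores from four rankings for six cities; "noise" disagrees with the rest.
rankings = {
    "a": [1, 2, 3, 4, 5, 6],
    "b": [2, 1, 4, 3, 6, 5],
    "c": [1, 3, 2, 5, 4, 6],
    "noise": [6, 1, 5, 2, 4, 3],
}
print(sorted(prune_indicators(rankings)))  # → ['a', 'b', 'c']
```

With all four rankings, alpha here is 16/27 ≈ 0.59. Dropping "noise", which has the only negative proxy loading, brings it to about 0.89, so the loop stops after one removal.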

Here is what the SEM model itself looks like once fit to Chill ranking data:

The color and thickness of each arrow indicates the direction and magnitude of influence (green is positive, red is negative). Constructs with significant effects on livability (p < 0.05) have their nodes filled in with color. We can see from this that the most livable Chill cities are larger but with low per capita crime, have high education levels, good access to health care, and more per capita amenities for arts & leisure (things like art museums, movie theaters, public parks, etc.). However, they have limited public transit options.
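In PLS path modeling, once construct scores have been estimated, each arrow into livability is (in the simplest case) a standardized coefficient from an ordinary least-squares regression of the livability scores on the scores of the constructs pointing at it. Here is a minimal Python sketch of that inner-model step, assuming construct scores are already in hand. Significance testing is omitted. The construct names and numbers are hypothetical, chosen so the answer is easy to verify by hand.

```python
from math import sqrt


def standardize(values):
    """Center to mean 0 and scale to (population) standard deviation 1."""
    m = sum(values) / len(values)
    sd = sqrt(sum((v - m) ** 2 for v in values) / len(values))
    return [(v - m) / sd for v in values]


def solve(a, b):
    """Solve the linear system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def path_coefficients(predictors, outcome):
    """Standardized OLS coefficients of the outcome on the predictors,
    from the normal equations in correlation form: R_xx @ beta = r_xy."""
    zx = [standardize(p) for p in predictors]
    zy = standardize(outcome)
    n = len(zy)

    def corr(u, v):
        # Mean of products of standardized scores = Pearson correlation.
        return sum(a * b for a, b in zip(u, v)) / n

    r_xx = [[corr(u, v) for v in zx] for u in zx]
    r_xy = [corr(u, zy) for u in zx]
    return solve(r_xx, r_xy)


# Hypothetical construct scores for four cities.
education = [1, 2, 3, 4]
arts_leisure = [1, -1, -1, 1]
livability = [5, 1, 3, 11]  # exactly 2 * education + 3 * arts_leisure
print([round(b, 3) for b in path_coefficients([education, arts_leisure], livability)])
# → [0.598, 0.802]
```

Because the two predictors here are uncorrelated, each coefficient equals that predictor's simple correlation with livability. With correlated predictors, the solve step is what keeps overlapping constructs from being double-counted.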

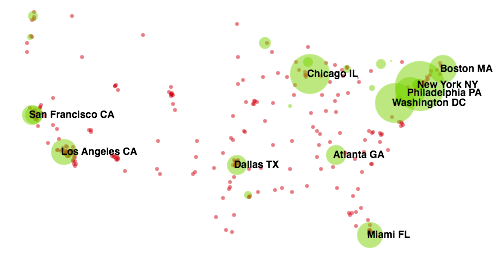

To visualize what kinds of places are described by this model, here is a "livability map" of the most Chill cities, with the top ten labeled. Green circles mean the city is above average (red is below average), and the size of the circle indicates the magnitude of the latent livability score:

(Note: Anchorage and Honolulu are cropped, but are both green of medium size.)

The Jetsetter Model

Now let’s turn to the Jetsetter ranking data. For this livability construct, I used all four rankings in the Jetsetter cluster as manifest variables, achieving alpha 0.77, rho 0.85, and eigenvalues of 2.4 and 0.73 (all suggest excellent reliability). The Jetsetter SEM paints a very different picture of what influences livability:

The most salient features seem to be high crime, good transit, and fewer amenities (per capita). Here is the Jetsetter “livability map” and top ten cities:

(Note: Anchorage and Honolulu are both green but tiny.)

The Kitchen Sink Model

OK, what happens if we just throw all 18 rankings together into a big hot mess? For starters, the livability construct isn’t as internally consistent as the other two (alpha 0.67, rho 0.75, and eigenvalues 3.4 and 2.4, which are all a little suspect). However, an interesting mix of cities emerges. The table below shows the top 25 cities for this hodgepodge ranking, alongside their ranks in the Chill and Jetsetter constructs for comparison.
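The eigenvalue check behind that "suspect" verdict can be sketched by computing the two largest eigenvalues of the rankings' correlation matrix. The usual rule of thumb for a unidimensional block is a first eigenvalue above 1 and a second below 1, so a second eigenvalue of 2.4 hints that the rankings measure more than one thing. This Python sketch uses power iteration with deflation, which is adequate for small symmetric positive semidefinite matrices like correlation matrices. It is an illustration, not how the R package computes them, and the example matrices are hypothetical.

```python
from math import sqrt


def matvec(m, v):
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def top_eigen(m, iterations=1000):
    """Largest eigenvalue and unit eigenvector of a symmetric PSD matrix (power iteration)."""
    v = [float(i + 1) for i in range(len(m))]  # non-uniform start vector
    lam = 0.0
    for _ in range(iterations):
        w = matvec(m, v)
        norm = sqrt(sum(x * x for x in w))
        if norm == 0.0:  # m maps v to zero: no positive eigenvalue left to find
            return 0.0, v
        v = [x / norm for x in w]
        lam = sum(a * b for a, b in zip(v, matvec(m, v)))  # Rayleigh quotient
    return lam, v


def first_two_eigenvalues(corr):
    lam1, v1 = top_eigen(corr)
    n = len(corr)
    # Deflation: remove the first component so the next-largest eigenvalue is on top.
    deflated = [[corr[i][j] - lam1 * v1[i] * v1[j] for j in range(n)] for i in range(n)]
    lam2, _ = top_eigen(deflated)
    return lam1, lam2


def looks_unidimensional(corr):
    """Rule of thumb: first eigenvalue above 1, second below 1."""
    lam1, lam2 = first_two_eigenvalues(corr)
    return lam1 > 1 and lam2 < 1


# Three indicators that all correlate at 0.5: eigenvalues are 2.0, 0.5, 0.5.
one_cluster = [[1.0, 0.5, 0.5], [0.5, 1.0, 0.5], [0.5, 0.5, 1.0]]
# Two unrelated pairs of indicators: eigenvalues are 1.9, 1.9, 0.1, 0.1.
two_clusters = [[1.0, 0.9, 0.0, 0.0], [0.9, 1.0, 0.0, 0.0],
                [0.0, 0.0, 1.0, 0.9], [0.0, 0.0, 0.9, 1.0]]
print(looks_unidimensional(one_cluster), looks_unidimensional(two_clusters))
```

The second matrix mimics throwing two independent clusters (like Chill and Jetsetter) into one block. Both of its top eigenvalues exceed 1, which is the pattern the Kitchen Sink numbers show.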

Cities in the top 25 for all three models are highlighted in green. Having spent some time in about half of these cities, I would say the highlighted ones seem to strike a better balance between down-home comfort, affordability, and culture with metropolitan vibes, history, and urban lifestyle.

| Kitchen Sink Rank | City and State | Chill Rank | Jetsetter Rank |
|---|---|---|---|
| 1 | New York NY | 96 | 1 |
| 2 | Washington DC | 18 | 2 |
| 3 | Chicago IL | 39 | 3 |
| 4 | Boston MA | 16 | 5 |
| 5 | Philadelphia PA | 42 | 4 |
| 6 | San Francisco CA | 14 | 9 |
| 7 | Pittsburgh PA | 5 | 22 |
| 8 | Minneapolis MN | 2 | 14 |
| 9 | Los Angeles CA | 49 | 7 |
| 10 | Seattle WA | 3 | 18 |
| 11 | Madison WI | 1 | 84 |
| 12 | Dallas TX | 40 | 10 |
| 13 | Buffalo NY | 6 | 15 |
| 14 | Miami FL | 134 | 6 |
| 15 | Oakland CA | 30 | 11 |
| 16 | Atlanta GA | 47 | 8 |
| 17 | Honolulu HI | 12 | 17 |
| 18 | Austin TX | 9 | 34 |
| 19 | Lincoln NE | 4 | 75 |
| 20 | Denver CO | 10 | 42 |
| 21 | Lexington KY | 4 | 67 |
| 22 | Saint Paul MN | 7 | 70 |
| 23 | Portland OR | 17 | 24 |
| 24 | Milwaukee WI | 41 | 16 |
| 25 | Houston TX | 24 | 20 |
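Picking out the balanced cities is a simple filter: keep a row only if both its Chill and Jetsetter ranks are 25 or better (every row is already in the Kitchen Sink top 25). Here is a minimal Python sketch, with the rows transcribed from the table above. The helper name `balanced_cities` is hypothetical.

```python
# (city, chill_rank, jetsetter_rank) for the Kitchen Sink top 25, in order.
KITCHEN_SINK_TOP_25 = [
    ("New York NY", 96, 1), ("Washington DC", 18, 2), ("Chicago IL", 39, 3),
    ("Boston MA", 16, 5), ("Philadelphia PA", 42, 4), ("San Francisco CA", 14, 9),
    ("Pittsburgh PA", 5, 22), ("Minneapolis MN", 2, 14), ("Los Angeles CA", 49, 7),
    ("Seattle WA", 3, 18), ("Madison WI", 1, 84), ("Dallas TX", 40, 10),
    ("Buffalo NY", 6, 15), ("Miami FL", 134, 6), ("Oakland CA", 30, 11),
    ("Atlanta GA", 47, 8), ("Honolulu HI", 12, 17), ("Austin TX", 9, 34),
    ("Lincoln NE", 4, 75), ("Denver CO", 10, 42), ("Lexington KY", 4, 67),
    ("Saint Paul MN", 7, 70), ("Portland OR", 17, 24), ("Milwaukee WI", 41, 16),
    ("Houston TX", 24, 20),
]


def balanced_cities(rows, cutoff=25):
    """Cities whose Chill and Jetsetter ranks are both within the cutoff."""
    return [city for city, chill, jet in rows if chill <= cutoff and jet <= cutoff]


print(balanced_cities(KITCHEN_SINK_TOP_25))
# → ['Washington DC', 'Boston MA', 'San Francisco CA', 'Pittsburgh PA', 'Minneapolis MN',
#    'Seattle WA', 'Buffalo NY', 'Honolulu HI', 'Portland OR', 'Houston TX']
```

These are the same ten cities listed at the top of the post.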

Conclusions and Caveats

The Chill and Jetsetter rankings are basically unrelated. In the principal component plot up top, the vectors for the two big clusters are pretty much orthogonal. And while the Chill and Jetsetter livability constructs seem weakly correlated at first glance (Pearson’s r = 0.28, p < 0.001), it turns out that this is because Davenport, Oxnard, West Jordan, and a hundred or so other places are at the bottom of both lists (in part due to a missing data problem discussed below). When you filter out the cities that simply bottom-out in both rankings, the correlation goes away (r = -0.01, p = 0.91).
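The "bottoming out" effect is easy to reproduce with simulated data. In the Python sketch below, two scores are drawn independently for most cities, but a block of cities sits at the same floor value on both lists, as happens when a city appears in no ranking at all. That shared floor alone produces a sizable correlation, which vanishes once those cities are filtered out. The city counts, floor value, and seed are arbitrary assumptions. The numbers are synthetic, not the real construct scores.

```python
import random
from math import sqrt


def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / sqrt(sxx * syy)


rng = random.Random(42)
n_ranked, n_floor, FLOOR = 190, 100, -3.0

# Independent scores for ranked cities, plus a shared floor for unranked ones.
chill = [rng.gauss(0, 1) for _ in range(n_ranked)] + [FLOOR] * n_floor
jet = [rng.gauss(0, 1) for _ in range(n_ranked)] + [FLOOR] * n_floor

r_all = pearson(chill, jet)
kept = [(c, j) for c, j in zip(chill, jet) if not (c == FLOOR and j == FLOOR)]
r_filtered = pearson([c for c, _ in kept], [j for _, j in kept])
print(f"all cities: r = {r_all:.2f}; without the shared floor: r = {r_filtered:.2f}")
```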

If I were to devise my own livability equation, it would probably look more like the Chill model. But when you run the numbers, its predictions are only moderately correlated with its own livability construct (r = 0.69), while predictions from the Jetsetter model are much better (r = 0.83) and the Kitchen Sink model’s are better still (r = 0.85). So while “Chill livability” makes more intuitive sense (at least to me), its influences may be too subtle to capture with the nine constructs I used here. (Originally, I did try to include other constructs like diversity, disease, and climate, but I couldn’t create internally consistent constructs from the census and weather data I used as manifest variables.)

Sample bias. While I did not hand-pick the rankings used for the Chill Model, it is a little suspicious that three of its top ten cities are places I’ve lived (Lexington, where I grew up; Madison, where I spent 9 years in and out of grad school; and Pittsburgh, where I live now). I consider all these places to be quite livable, and even remember them being named “most livable” by some measure or another while I lived in them. But it’s also possible that the set of rankings I started out with was biased: they mostly came from my Facebook feed, and most of my friends live in these places!

Missing data. Confession: the majority of these livability rankings simply list the “top ten” (or 50, or 100) places. So most of the manifest variables I could use for the livability construct had missing values for some of the 292 cities. My way of dealing with this was to invert the ranks (i.e., the city ranked #1 in a top ten list had value 10 since it is “more” livable; rank #10 had value 1, which is “less” livable, etc.), and any cities missing from a particular list had value zero. This approach was probably unfair to places like Boulder, which seems quite livable but just didn’t crack any top ten lists.
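The inversion scheme fits in a short Python function. The function name and the list contents below are hypothetical placeholders, and the function assumes each list is given in rank order, best first.

```python
def inverted_rank_scores(ranked_list, all_cities):
    """Map a top-N list to scores: #1 -> N, #N -> 1, unlisted cities -> 0."""
    n = len(ranked_list)
    scores = {city: 0 for city in all_cities}
    for position, city in enumerate(ranked_list, start=1):
        scores[city] = n - position + 1
    return scores


all_cities = ["Madison WI", "Pittsburgh PA", "Boulder CO", "Lexington KY"]
top_three = ["Pittsburgh PA", "Madison WI", "Lexington KY"]  # hypothetical list
print(inverted_rank_scores(top_three, all_cities))
# → {'Madison WI': 2, 'Pittsburgh PA': 3, 'Boulder CO': 0, 'Lexington KY': 1}
```

Under this scheme a city missing from every list scores zero everywhere. That is exactly the Boulder problem: "not listed" becomes indistinguishable from "least livable".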

The puzzling influence of size. One of the most surprising results is the weight of the size construct in both models (created from three manifest variables: 2010 and 2013 populations as well as land area in square km). It’s odd that size has a positive weight in the Chill model and a negative weight in the Jetsetter model, when the latter cities are in fact much larger. The top 25 Chill cities have an average population of 601,900, compared to 1,163,000 for the top 25 Jetsetter cities.

My guess is that size interacts weirdly with crime (which is a superlinear function of size) and with arts/leisure (which is negatively correlated with size: r = -0.43, p < 0.001). The crime and arts/leisure constructs are normalized per capita, so the true effects of size might be masked by some sort of “tie-breaking.” For example, Madison and Chandler are similarly large, but Madison is more Chill, with less crime and more arts/leisure to offer. Likewise, Indianapolis and Washington DC have similar crime rates, but Washington is more Jetsetter even though it is smaller. I tried adding more links to the SEM graph to start modeling some of these interactions, but the weights didn’t change much and the overall goodness-of-fit actually degraded. Since I’m an SEM newbie, I’ve set this aside for now, but there is probably more to explore here.

Cost of living and pollution. It is also interesting that these two constructs, which seem like reasonable influences on livability, have essentially no effect according to either the Chill or Jetsetter model. Perhaps, like “happiness,” they ultimately aren’t major considerations when weighed alongside everything else that can impact quality of life in America.
